
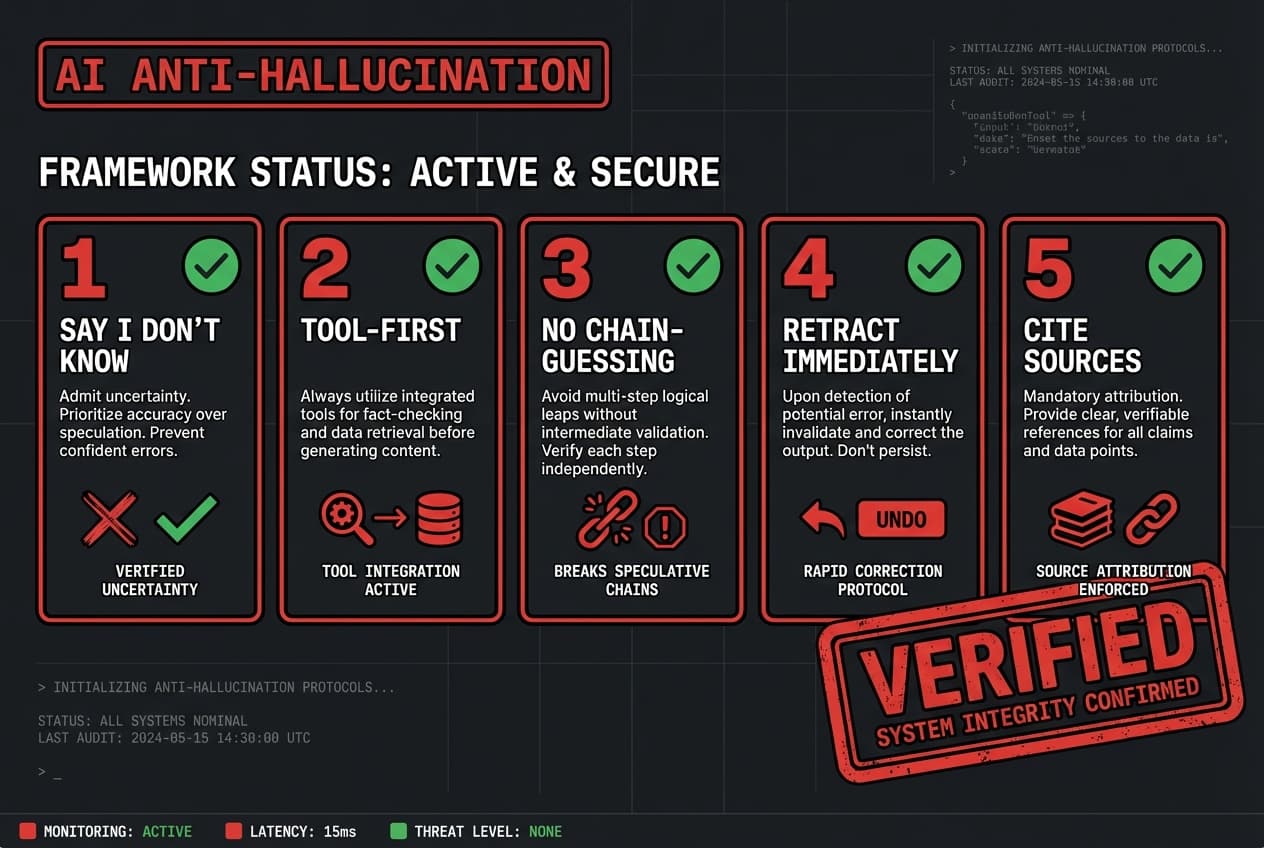

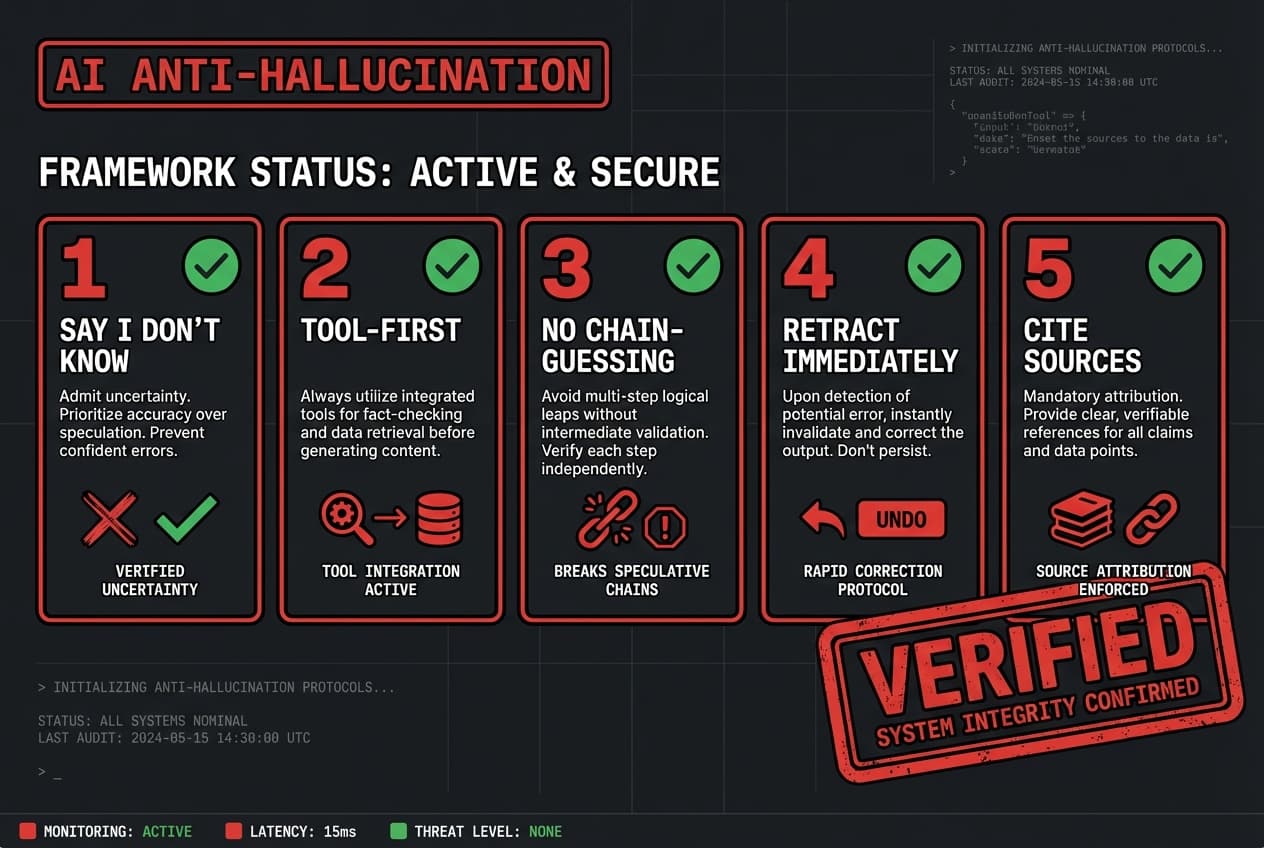

AI Anti-Hallucination Rules

Framework for Preventing AI Confabulation

The Problem

AI coding assistants frequently hallucinate -- inventing file contents, API signatures, and system states that don't exist. Developers waste time debugging phantom issues that the AI confidently described.

The Solution

A 5-rule anti-hallucination framework: (1) Say "I don't know", (2) Tool-first, not memory-first, (3) No chain-guessing, (4) Retract immediately, (5) Cite the source. Battle-tested across hundreds of coding sessions and published as a public gist and GitHub repo for community use.
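As a rough illustration of how the five rules might sit in a CLAUDE.md file, here is a minimal sketch. Only the rule names come from the framework; the explanatory sentence after each name is an assumed reading written for this example and is not the wording of the published gist or repo.

```markdown
<!-- Illustrative sketch only: rule names are from the framework,
     the explanations are assumptions, not the published text. -->
# Anti-Hallucination Rules

1. **Say "I don't know".** If something has not been verified, say so instead of guessing.
2. **Tool-first, not memory-first.** Read the file or run the command before describing its contents or output.
3. **No chain-guessing.** Do not stack a conclusion on an unverified assumption; verify each step first.
4. **Retract immediately.** If an earlier statement turns out to be wrong, correct it as soon as it is noticed.
5. **Cite the source.** Name the file, line, or command output behind each factual claim.
```

Because the rules are plain Markdown instructions, the same text should carry over to other assistants that read a project-level instruction file, though the file name and location will differ by tool.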

Tech Stack

Markdown, CLAUDE.md, Prompt Engineering

Key Features

5 concrete rules for preventing AI hallucination

Battle-tested across hundreds of sessions

Compatible with any AI coding assistant

Public gist for easy adoption

Practical examples for each rule